In a bold move that's reshaping the AI landscape, Elon Musk's xAI has announced the completion of Colossus, billed as the world's largest single AI training supercomputer. Operational as of November 19, 2024, this behemoth packs 100,000 Nvidia H100 GPUs into a single cluster, constructed in an astonishing 122 days in Memphis, Tennessee. The reveal, shared by Musk on X (formerly Twitter), underscores xAI's aggressive push to challenge industry leaders like OpenAI, Google, and Anthropic in the race for artificial general intelligence (AGI).

The Birth of Colossus: Speed and Scale

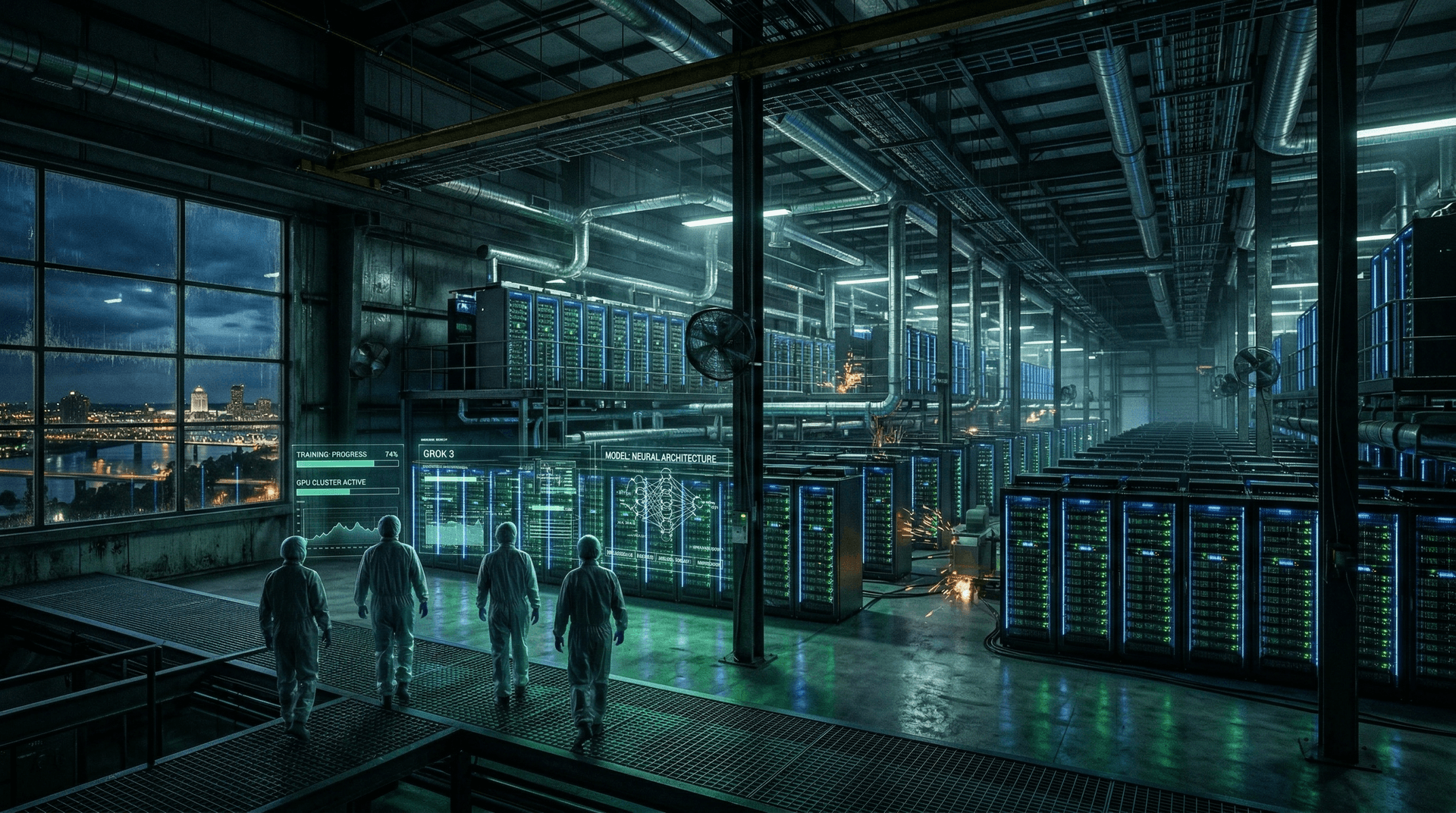

What sets Colossus apart isn't just its size but the speed of its deployment. xAI converted a former Electrolux facility into a data center, installing GPUs at a rate of roughly 1,000 per day in the final stretch of the build. Backed by electrical infrastructure capable of delivering up to 150 megawatts, enough to light up a small city, the cluster is already training the next iteration of xAI's flagship model, Grok 3.
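The figures in this section can be sanity-checked with simple arithmetic. The sketch below divides the quoted 150 megawatts across 100,000 GPUs and compares the result with the chip's rated power. The 700 W rating is an assumption taken from Nvidia's published maximum for the H100 SXM, not a number from xAI, and the even split across GPUs is a simplification.

```python
# Figures quoted in the article.
GPU_COUNT = 100_000
SITE_POWER_MW = 150
BUILD_DAYS = 122

# Assumption (not from the article): Nvidia's published maximum
# power rating for a single H100 SXM module.
H100_TDP_WATTS = 700


def watts_per_gpu(site_power_mw: float, gpu_count: int) -> float:
    """Site power divided evenly across all GPUs, in watts."""
    return site_power_mw * 1_000_000 / gpu_count


def average_install_rate(gpu_count: int, days: int) -> float:
    """Average GPUs installed per day over the whole build."""
    return gpu_count / days


per_gpu = watts_per_gpu(SITE_POWER_MW, GPU_COUNT)
overhead = per_gpu - H100_TDP_WATTS

print(f"Power budget per GPU: {per_gpu:.0f} W")
print(f"Left for CPUs, networking, cooling: {overhead:.0f} W per GPU")
print(f"Average install rate: {average_install_rate(GPU_COUNT, BUILD_DAYS):.0f} GPUs/day")
```

Under these assumptions the site budget works out to about twice the GPU's own rating, which leaves room for host servers, networking, and cooling. The whole-build average also comes in below the 1,000-per-day pace reported for the final stretch, consistent with installation speeding up over time.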

Musk highlighted the achievement in a post: "Colossus 1 has 100k H100s. Built in 122 days. Already training Grok 3. World’s most powerful AI training system by a factor of 3x (if you consider liquid-cooled H100s). Next gen will be 200k H100s expanding to 1 million H100s/H200s/B200s/etc."

The scale claim holds up against publicly reported figures. For context, the largest clusters Meta has publicly detailed hold around 24,000 GPUs each, while OpenAI's rumored setups for GPT-5 are believed to be smaller in single-cluster terms. xAI's feat relied on partnerships with Nvidia, Dell, and Supermicro for hardware, and on power sourced from the Tennessee Valley Authority (TVA).

Fueling Grok 3: A Leap Toward AGI

Colossus's primary mission is to train Grok 3, expected by the end of 2024. Grok, xAI's conversational AI inspired by the Hitchhiker's Guide to the Galaxy, has evolved rapidly since its 2023 debut. Grok 2, released in August 2024, already competes with top models like GPT-4o and Claude 3.5 Sonnet on benchmarks. Grok 3 promises even greater capabilities, with Musk claiming it will be "the world's most powerful AI by every metric by Dec this year."

The training process leverages xAI's unique approach: massive compute paired with real-time data from X's 500 million+ users. Unlike rivals constrained by safety guardrails, Grok emphasizes "maximum truth-seeking" with minimal censorship, aligning with Musk's vision of uncensored AI.

| Feature | Colossus | Competitors |
|---------|----------|-------------|
| GPUs | 100,000 H100 | Meta: 24k; OpenAI: ~10k-50k est. |
| Build Time | 122 days | 1-2 years typical |
| Power | 150 MW+ | 50-100 MW |
| Purpose | Grok 3 training | Various models |

The AI Arms Race Heats Up

Colossus arrives amid escalating competition. Since the U.S. election on November 5, 2024, AI infrastructure has risen in priority. President-elect Trump's pro-innovation stance, coupled with Musk's advisory role via the Department of Government Efficiency (DOGE), could streamline regulations for such projects.

xAI isn't alone. Oracle announced a 131,072-GPU cluster for OpenAI in September, but it's multi-site. Microsoft's Stargate project eyes millions of chips by 2028. Yet keeping every GPU in a single cluster has advantages: Nvidia's NVLink connects the GPUs inside each server, and one high-bandwidth network fabric links the servers to each other, which cuts communication latency when training massive models.

Critics point to environmental costs: by their estimates, 100,000 H100s consume as much energy as 100,000 households. xAI counters that liquid cooling reduces power needs by 30% and says it plans to integrate renewable sources. On cost, estimates peg Colossus at $4-5 billion, funded partly by xAI's May 2024 $6 billion Series B.
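Both the household comparison and the cost estimate reduce to back-of-the-envelope arithmetic. The sketch below treats the 150 MW figure as a continuous draw, which is an upper bound rather than a measured load, and assumes an average U.S. household uses roughly 10,500 kWh per year. That household figure is an approximation introduced here for illustration, not a number from the article.

```python
HOURS_PER_YEAR = 8_760

# Assumption (not from the article): approximate annual electricity
# use of an average U.S. household, in kilowatt-hours.
HOUSEHOLD_KWH_PER_YEAR = 10_500


def annual_energy_gwh(power_mw: float) -> float:
    """Energy used in a year at a constant draw, in gigawatt-hours."""
    return power_mw * HOURS_PER_YEAR / 1_000


def household_equivalent(power_mw: float,
                         household_kwh: float = HOUSEHOLD_KWH_PER_YEAR) -> float:
    """Number of households using the same energy per year."""
    annual_kwh = power_mw * 1_000 * HOURS_PER_YEAR
    return annual_kwh / household_kwh


def cost_per_gpu(total_usd: float, gpu_count: int) -> float:
    """Total project cost spread evenly across GPUs."""
    return total_usd / gpu_count


print(f"Annual energy at 150 MW: {annual_energy_gwh(150):,.0f} GWh")
print(f"Household equivalent: {household_equivalent(150):,.0f}")
for total in (4e9, 5e9):
    print(f"${total / 1e9:.0f}B total -> ${cost_per_gpu(total, 100_000):,.0f} per GPU")
```

With these inputs the household equivalent lands in the low hundreds of thousands, the same order of magnitude as the critics' figure. The per-GPU cost covers the whole facility, so it includes buildings, power, networking, and cooling as well as the chips.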

Broader Implications for AI Development

This supercomputer lowers the barrier to rapid iteration. In AI, compute is king: empirical scaling laws suggest that model performance improves predictably as training compute grows. Colossus positions xAI to release updates monthly, not yearly, accelerating progress toward AGI.
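To put "compute is king" in numbers, a common rule of thumb estimates the training cost of a dense transformer at about 6 × parameters × tokens floating-point operations. The sketch below applies that rule to a 100,000-GPU cluster. The per-GPU throughput, the utilization, and the model and dataset sizes are all illustrative assumptions, not figures from xAI.

```python
SECONDS_PER_DAY = 86_400

# Assumptions (not from the article):
PEAK_FLOPS_PER_GPU = 1e15   # rough dense BF16 peak for one H100, in FLOP/s
UTILIZATION = 0.4           # fraction of peak sustained during training
PARAMS = 1e12               # hypothetical model size (parameters)
TOKENS = 1e13               # hypothetical training set size (tokens)


def cluster_flops_per_day(gpu_count: int,
                          peak_flops_per_gpu: float,
                          utilization: float) -> float:
    """Useful FLOPs the whole cluster delivers in one day."""
    return gpu_count * peak_flops_per_gpu * utilization * SECONDS_PER_DAY


def training_flops(params: float, tokens: float) -> float:
    """Rule-of-thumb training cost: about 6 FLOPs per parameter per token."""
    return 6 * params * tokens


def training_days(params: float, tokens: float, flops_per_day: float) -> float:
    """Days needed to train at the given daily throughput."""
    return training_flops(params, tokens) / flops_per_day


daily = cluster_flops_per_day(100_000, PEAK_FLOPS_PER_GPU, UTILIZATION)
print(f"Cluster throughput: {daily:.3e} FLOPs/day")
print(f"Estimated training time: {training_days(PARAMS, TOKENS, daily):.1f} days")
```

Halving the GPU count doubles the estimated training time under this model, which is why cluster size translates so directly into iteration speed.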

For Memphis, it's an economic boon: 300+ jobs created, with expansion plans to 1 million GPUs promising thousands more. xAI's choice of the U.S. South avoids coastal energy crunches, tapping industrial power grids.

Challenges loom. Supply chain bottlenecks for H100s/B200s persist, despite Nvidia's ramp-up. Geopolitical tensions over chips add risk. Ethically, unchecked power raises misuse concerns, though xAI prioritizes alignment through diverse training data.

Looking Ahead: Expansion and Beyond

Musk teased an immediate doubling to 200,000 GPUs, then scaling to a million GPUs across a mix of H100s, H200s, and Blackwell B200s. This could enable multimodal Grok variants handling video, code, and robotics, hinting at Tesla integration.

As 2024 closes, Colossus symbolizes a paradigm shift: AI development as infrastructure arms race. xAI's speed challenges incumbents, potentially democratizing access via Grok's availability on X. Whether it delivers Grok 3 as promised remains to be seen, but one thing's clear: the future of intelligence is being forged in Memphis, one GPU at a time.
